I postponed this feature for a long time because it seemed complicated to me. But with a good GUI architecture and a good IDE, it was much faster to implement than I estimated!! I estimated one week of work... and it was done in 5 hours!

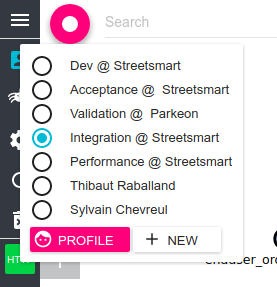

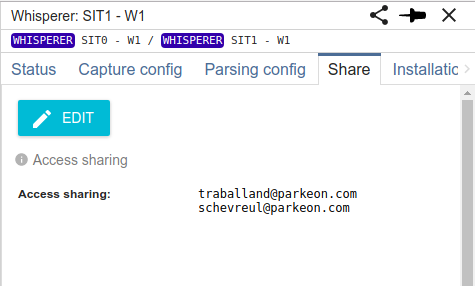

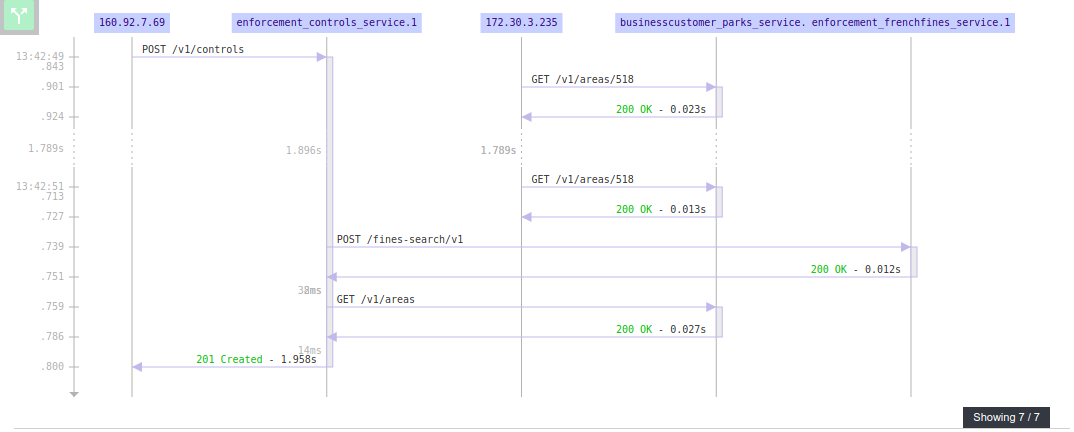

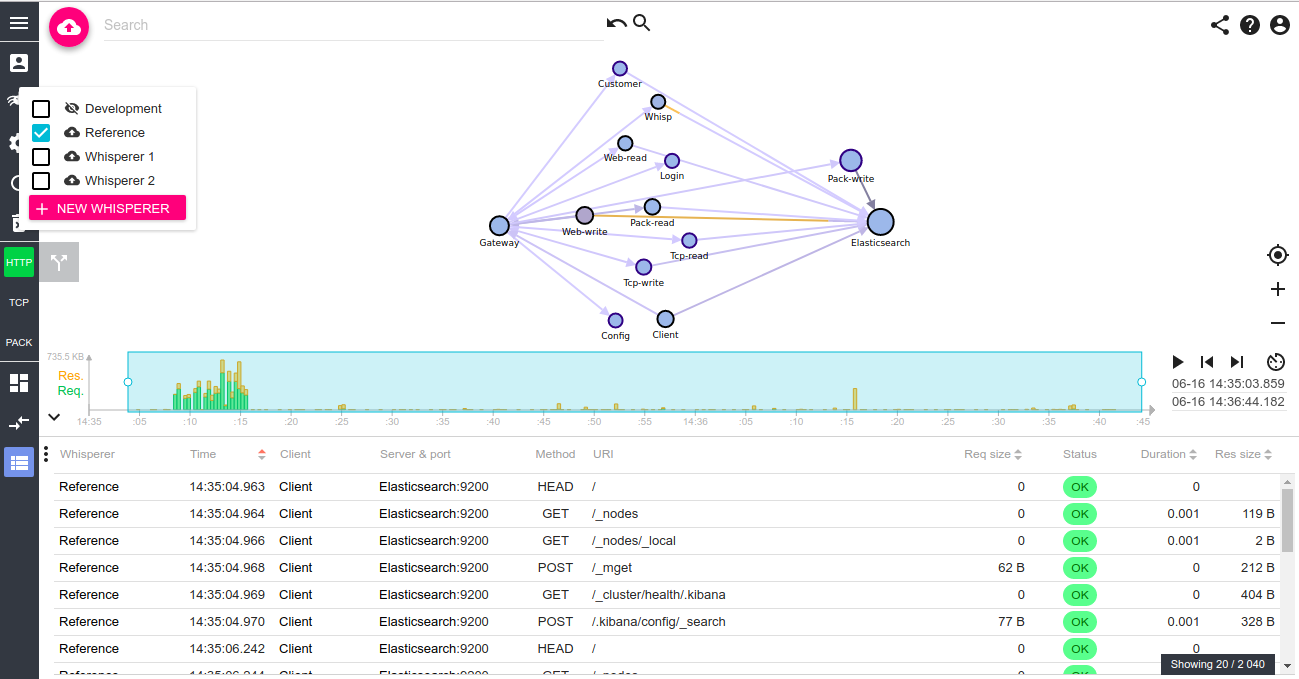

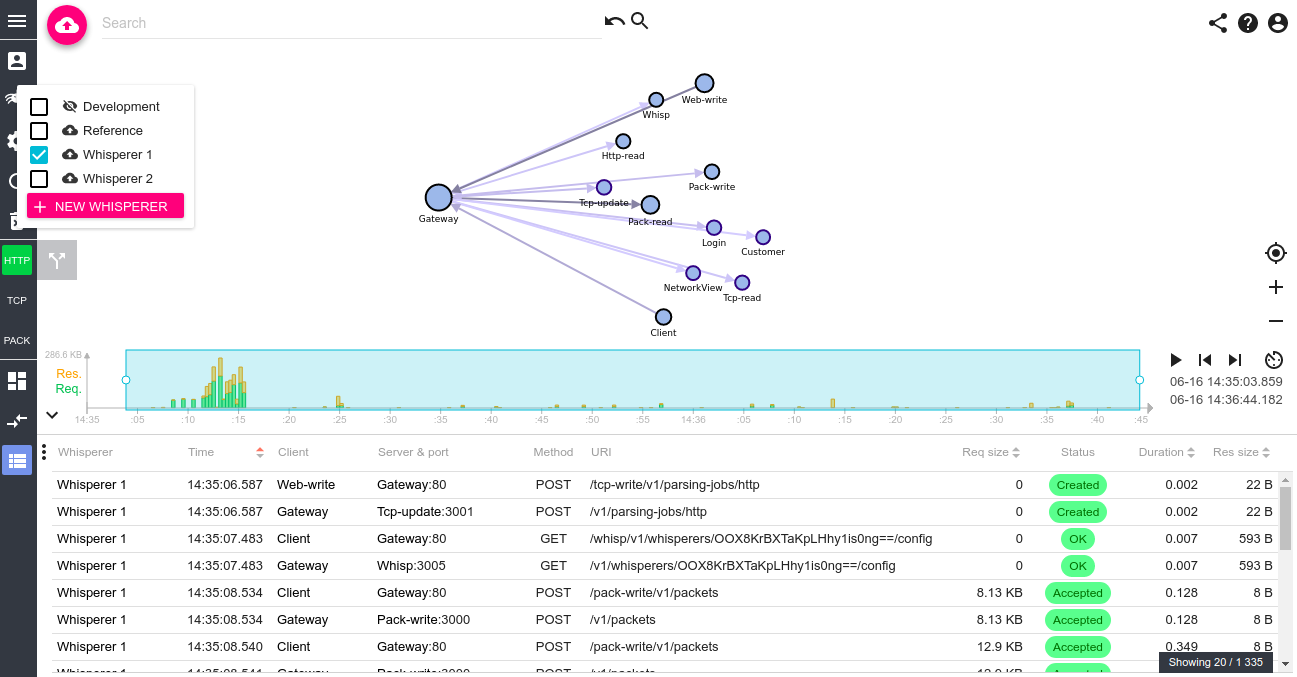

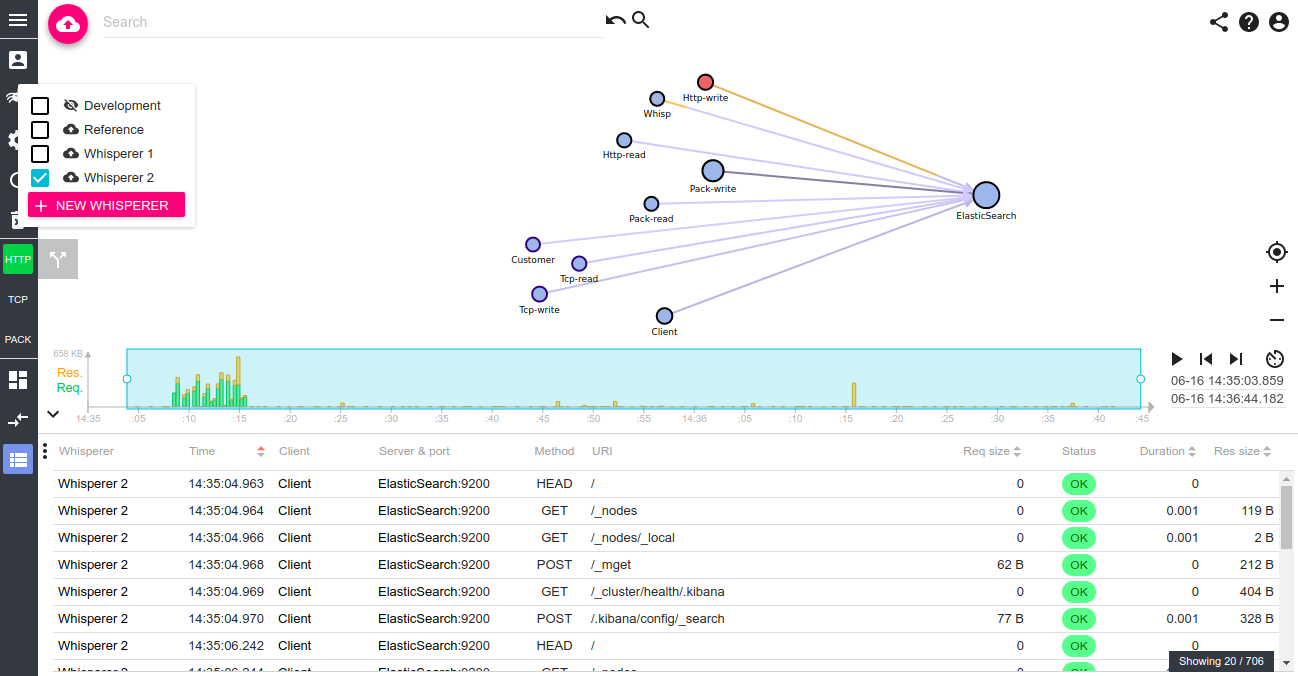

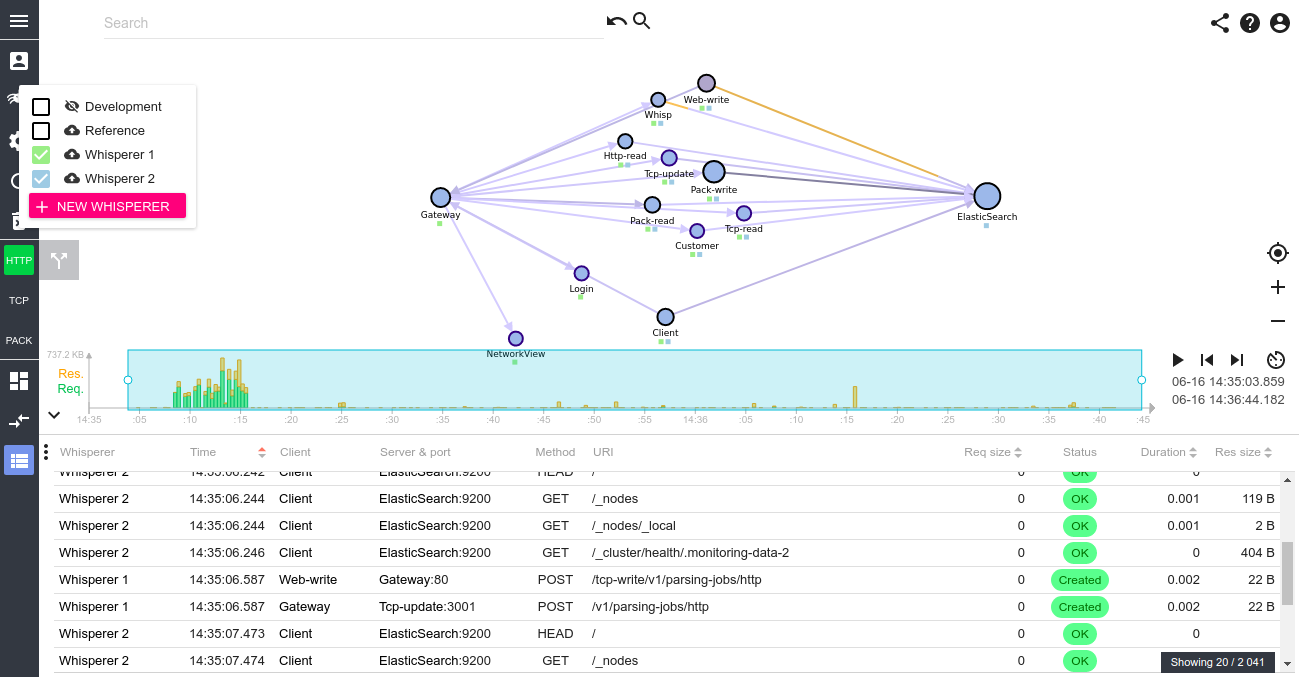

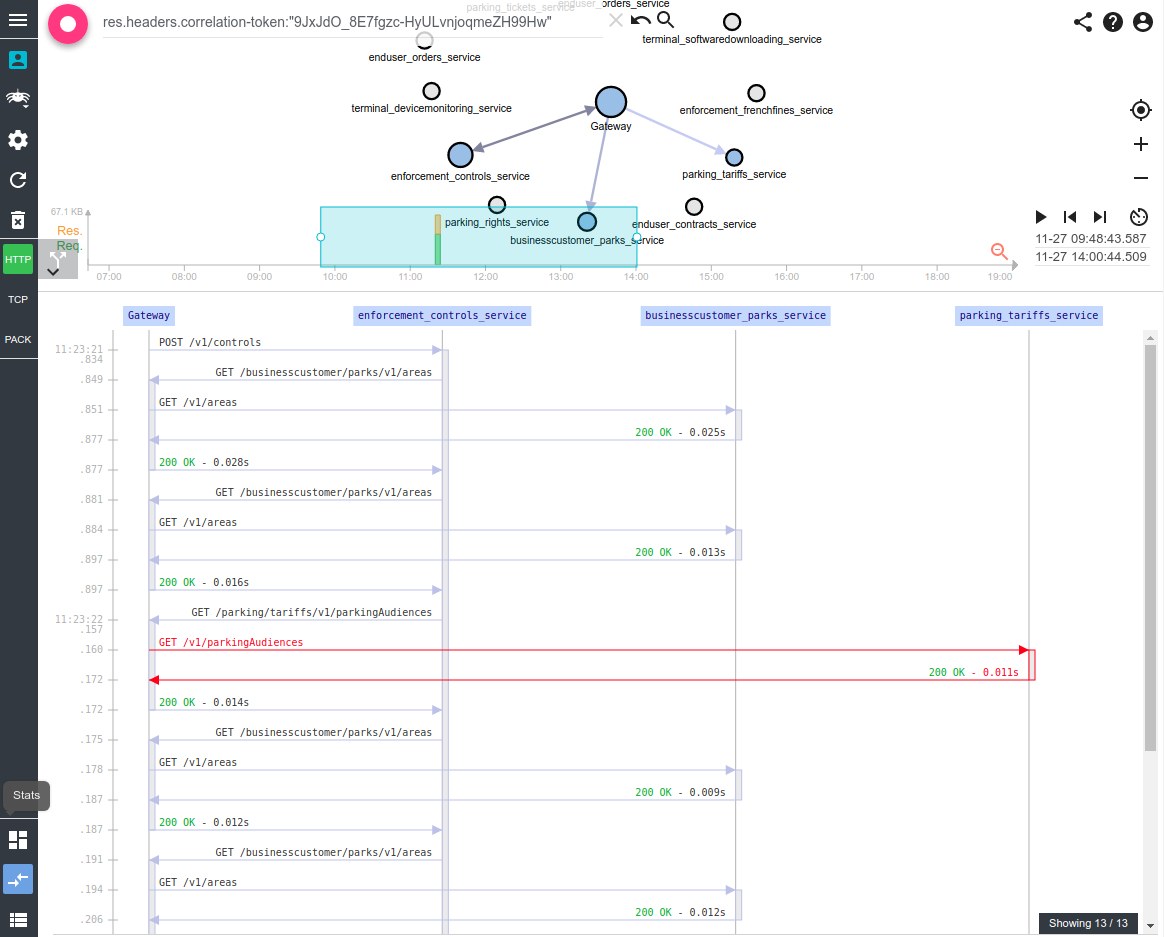

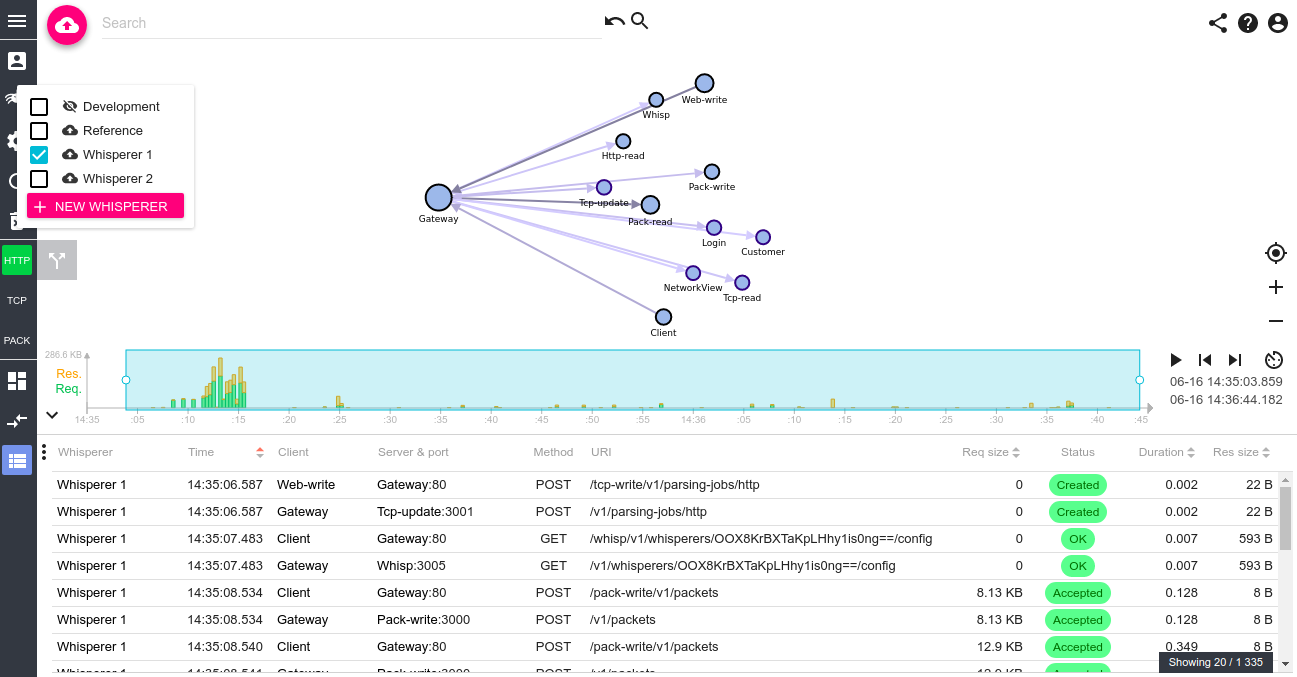

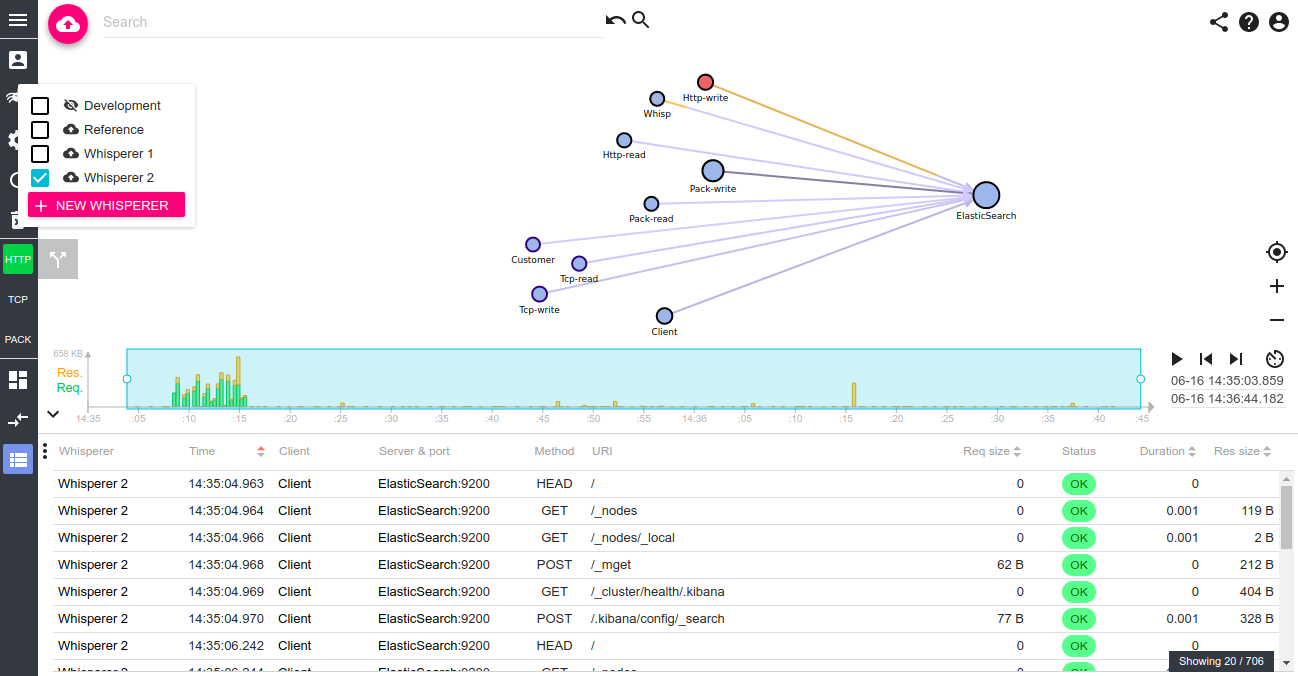

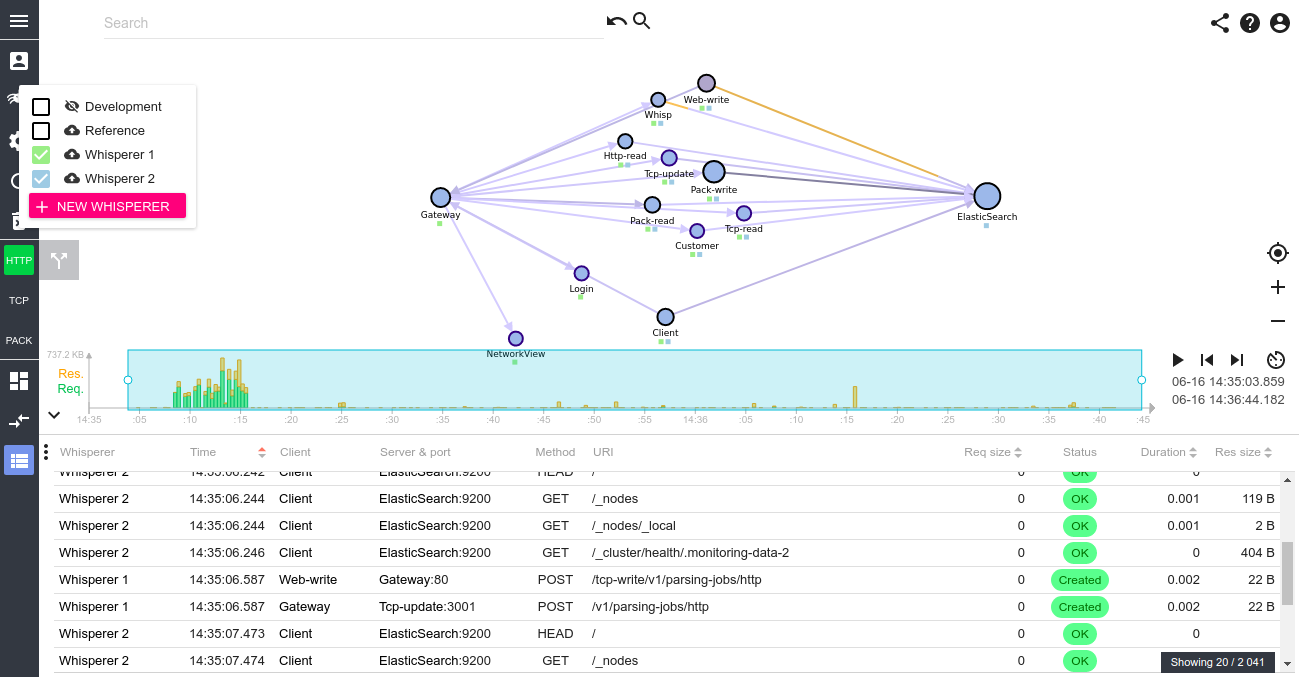

Spider can now display and search data from several Whisperers at once!

By holding Shift or Ctrl while clicking, you can select several Whisperers at once and combine their data in the Network Map, Grid, Sequence diagram, and all the searches and filters.

There may still be some bugs hanging around, but it seems to work fine :-) Building the map is a bit slower because of the preprocessing done in the browser, but you shouldn't notice it much.
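To give an idea of what this kind of browser-side preprocessing can look like, here is a minimal sketch. It is not Spider's actual code: the `WhispererNode` and `MergedNode` shapes, the `packets` counter, and the `mergeWhisperers` function are all assumptions made up for illustration. It merges the node lists of the selected Whisperers into one structure and remembers which Whisperer reported each node.

```typescript
// Hypothetical shapes: Spider's real data model is not shown in this post.
interface WhispererNode {
  id: string;      // e.g. an IP address or a host name
  packets: number; // illustrative traffic counter reported by one Whisperer
}

interface MergedNode {
  id: string;
  packets: number;  // summed over all selected Whisperers
  seenBy: string[]; // names of the Whisperers that reported this node
}

// Combine the node lists of every selected Whisperer into one record keyed by node id.
function mergeWhisperers(
  selected: Record<string, WhispererNode[]>
): Record<string, MergedNode> {
  const merged: Record<string, MergedNode> = {};
  for (const whisperer of Object.keys(selected)) {
    for (const node of selected[whisperer]) {
      let entry = merged[node.id];
      if (!entry) {
        // First time this node is seen: create its merged entry.
        entry = { id: node.id, packets: 0, seenBy: [] };
        merged[node.id] = entry;
      }
      entry.packets += node.packets;
      if (entry.seenBy.indexOf(whisperer) === -1) {
        entry.seenBy.push(whisperer);
      }
    }
  }
  return merged;
}

// Example: two Whisperers that both saw node 10.0.0.2.
const combined = mergeWhisperers({
  gatewayA: [{ id: "10.0.0.1", packets: 3 }, { id: "10.0.0.2", packets: 1 }],
  gatewayB: [{ id: "10.0.0.2", packets: 4 }],
});
console.log(combined["10.0.0.2"].seenBy.join(", "));
```

A merge like this runs once per selection change, which would explain a small delay before the map is drawn. The `seenBy` list is the kind of information a view could use to show, per node, which Whisperers observed it.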

Now, I can tell Pawel that we can put several Gateways on SIT1 and co :) !! And I'm getting ready for complex systems!

Notice the small squares on the map that tell which nodes have been seen by which Whisperer?

Aren't they nice?