Improving GUI loading U/X

Hi,

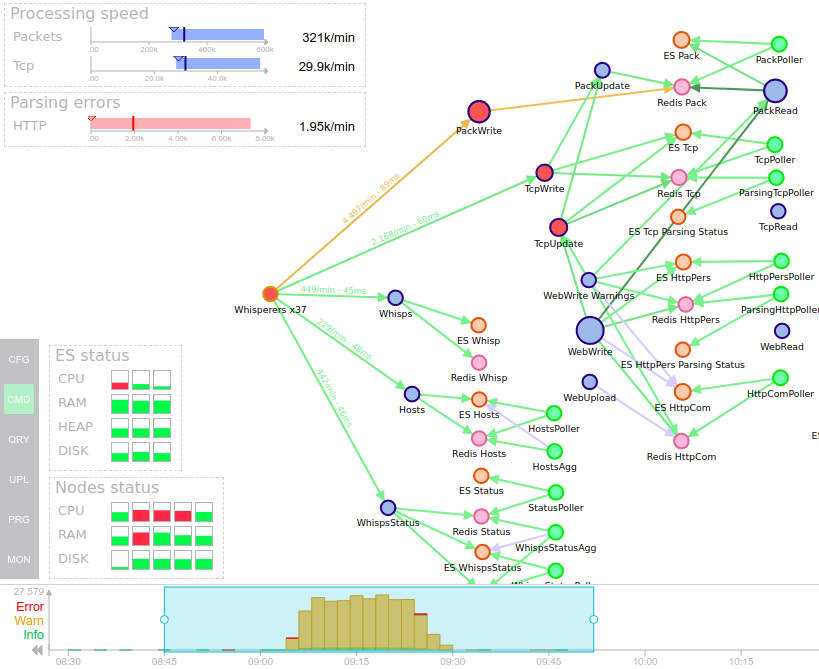

I recently spent a couple of hours improving the GUI loading U/X. The first queries were slow when no zoom was active on the timeline.

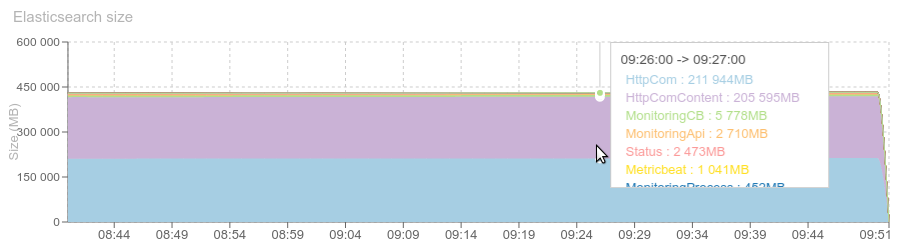

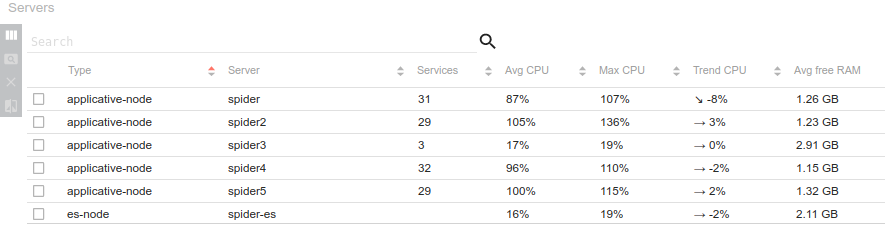

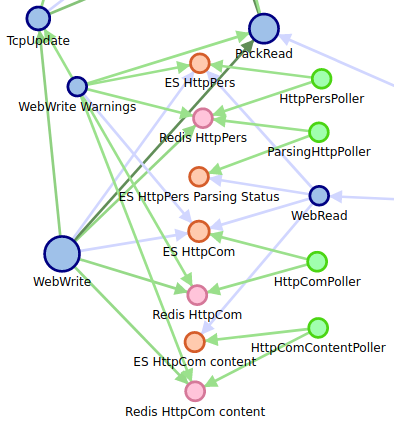

I thought this was mostly related to the amount of data Elasticsearch had to load to run its queries, because the HTTP content was stored in the same index as all the metadata.

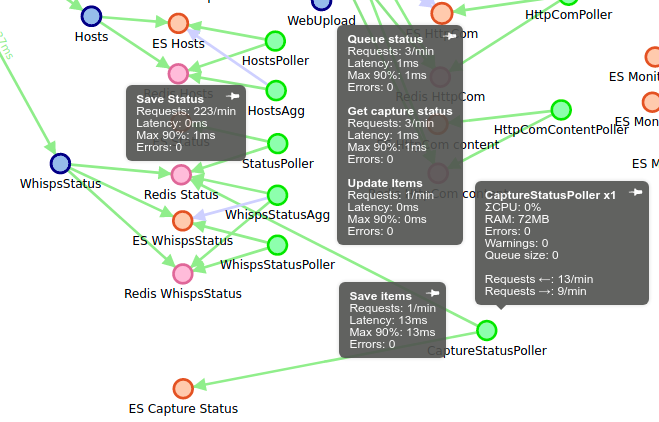

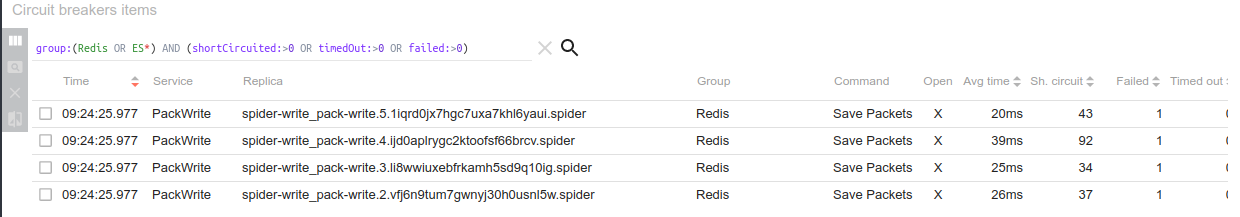

So I split this index: metadata and content are now stored separately, and they are merged only when a single HTTP communication is requested, or when exporting.
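To illustrate the idea, here is a minimal in-memory sketch of a split metadata/content store, assuming hypothetical content field names (`request_body`, `response_body`); the real index names, fields, and poller logic are not described in the post. Searches only touch the small metadata documents, and the large HTTP bodies are merged back in only when one communication is opened.

```python
from dataclasses import dataclass, field
from typing import Any, Dict, Iterator, Tuple

# Hypothetical field names: the actual document layout is not given in the post.
CONTENT_FIELDS: Tuple[str, ...] = ("request_body", "response_body")


@dataclass
class SplitStore:
    # Small documents used by the timeline and search queries.
    metadata: Dict[str, Dict[str, Any]] = field(default_factory=dict)
    # Large HTTP bodies, read only for a single communication or an export.
    content: Dict[str, Dict[str, Any]] = field(default_factory=dict)

    def index(self, doc_id: str, doc: Dict[str, Any]) -> None:
        """Split one incoming document into a metadata part and a content part."""
        self.metadata[doc_id] = {k: v for k, v in doc.items() if k not in CONTENT_FIELDS}
        self.content[doc_id] = {k: doc[k] for k in CONTENT_FIELDS if k in doc}

    def search(self, **filters: Any) -> Iterator[Dict[str, Any]]:
        """Filter on metadata only, so HTTP bodies are never scanned."""
        for doc_id, meta in self.metadata.items():
            if all(meta.get(k) == v for k, v in filters.items()):
                yield {"id": doc_id, **meta}

    def get_full(self, doc_id: str) -> Dict[str, Any]:
        """Merge metadata and content for a single communication (or an export)."""
        return {**self.metadata[doc_id], **self.content.get(doc_id, {})}
```

The design choice is the same trade-off as in the post: listing and aggregating stay cheap because they never read the bodies, while opening one communication pays for one extra lookup.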

This almost halved the size of the index, and it cost me only one new poller and 2 new indices in the architecture.

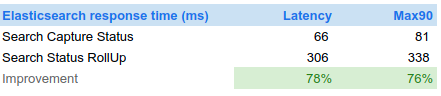

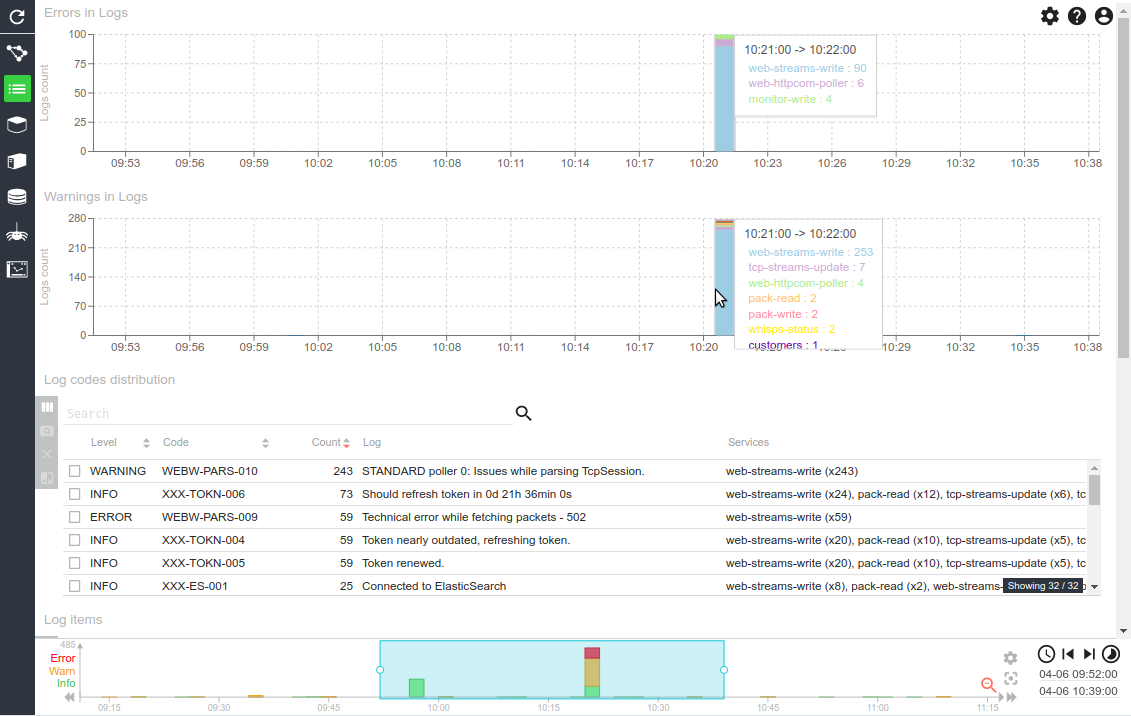

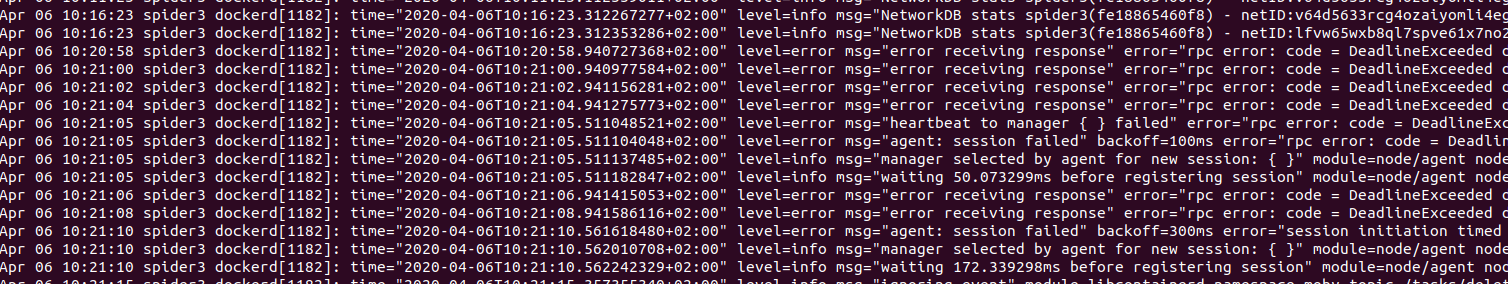

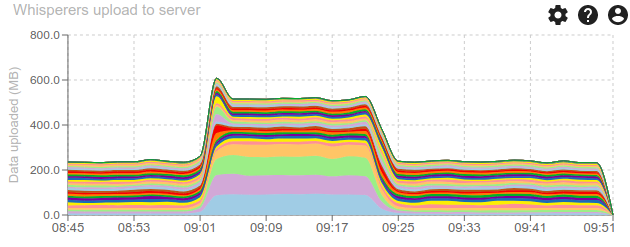

The changes paid off: no more timeouts on page load. But it still took 11s for 7 days of SIT1...

So I looked closer.

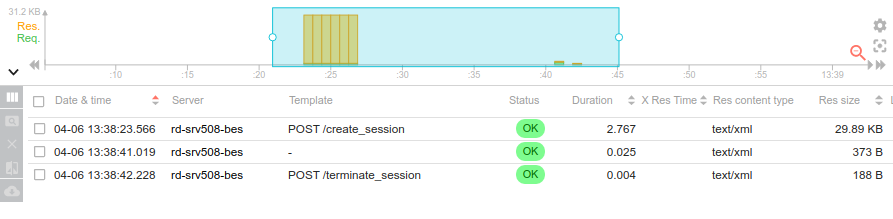

Step 2 - Avoiding the duplicated load

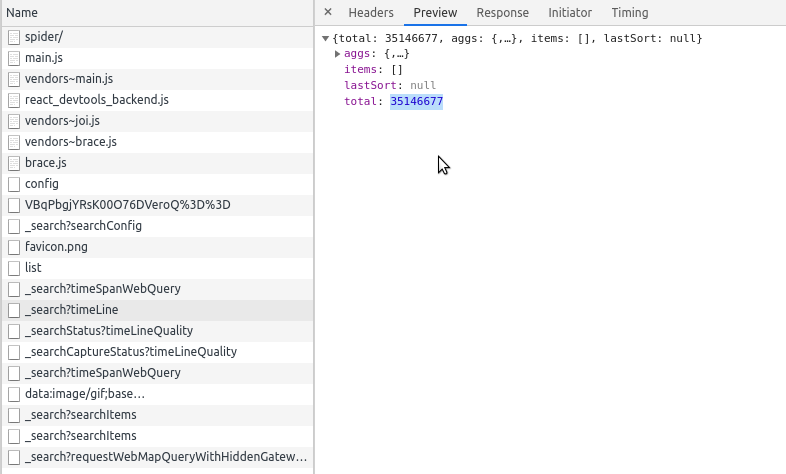

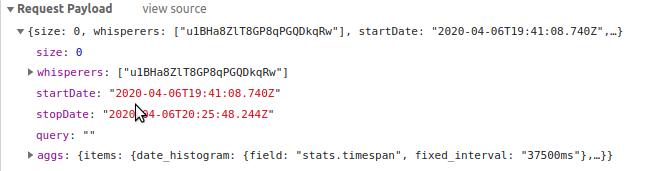

In fact, when loading the UI without zoom, the timeline's search queries were executed twice!

It took me a while to figure out. After some refactoring, I fixed 3 places that could cause the issue, one of them in the timeline itself. And it is fixed :) !!
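The fix described here was refactoring the 3 call sites. As a complementary, generic safeguard (not the author's method), identical queries issued concurrently can share one in-flight request. Below is a minimal asyncio sketch; `run_query` stands in for whatever actually sends the search and is hypothetical.

```python
import asyncio
import json
from typing import Any, Awaitable, Callable, Dict


class InFlightDeduplicator:
    """Share one execution between identical queries issued at the same time."""

    def __init__(self, run_query: Callable[[Dict[str, Any]], Awaitable[Any]]):
        self._run_query = run_query  # hypothetical: the function that really sends the query
        self._pending: Dict[str, "asyncio.Task[Any]"] = {}

    async def query(self, body: Dict[str, Any]) -> Any:
        # Canonical key so that equal query bodies map to the same entry.
        key = json.dumps(body, sort_keys=True)
        task = self._pending.get(key)
        if task is None:
            task = asyncio.create_task(self._run_query(body))
            self._pending[key] = task
            # Drop the entry once the query finishes, so later calls run a fresh query.
            task.add_done_callback(lambda _t, k=key: self._pending.pop(k, None))
        return await task
```

With this in place, two components asking for the same timeline query during page load would trigger a single backend request and both receive its result.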

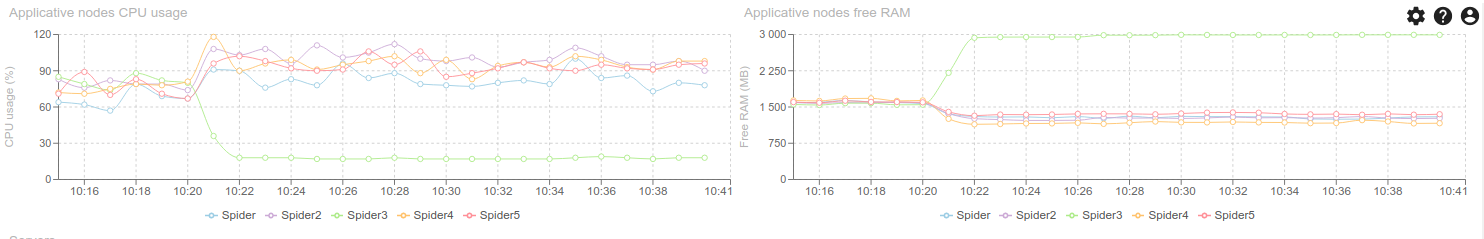

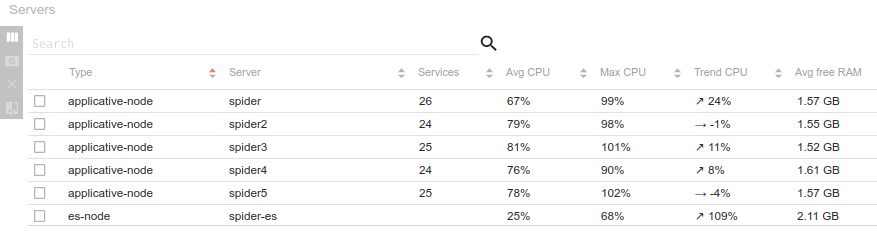

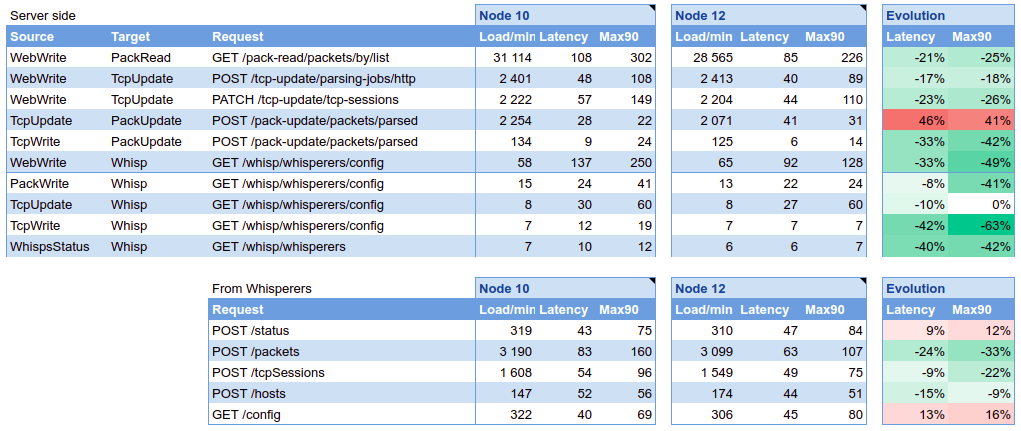

The number of queries needed to load the page has been reduced, and the speed is much better! From 11s, we are down to 4-6s. That is still significant, but considering that it runs its aggregations in parallel over more than 350 million records for 7 days of SIT1... it is great :oO Especially on a 2-core AWS M5 server.